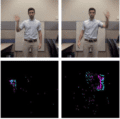

A Low Power, Fully Event-Based Gesture Recognition System

IBM Research

This dataset was used to build the real-time gesture recognition system described in the CVPR 2017 paper titled “A Low Power, Fully Event-Based Gesture Recognition System.” The data was recorded using a DVS128. The dataset contains 11 hand gestures from 29 subjects under 3 illumination conditions and is released under a Creative Commons Attribution 4.0 license….

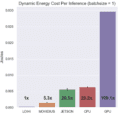

ABR Keyword Spotting Power Benchmarks

Applied Brain Research

This dataset contains power benchmarking code for running a simple two-layer, 256-neuron-per-layer neural network keyword spotter on both neuromorphic and conventional hardware devices….

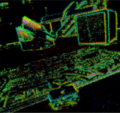

EV-IMO: An Event Camera Motion Segmentation Dataset

Perception and Robotics Group, University of Maryland

A collection of indoor datasets for motion segmentation and ego-motion estimation, gathered with a variety of event-based sensors. The dataset features 6-DoF poses for the camera and independently moving objects, updated at 200 Hz, and pixel-wise object masks at 40 Hz. Depth maps and classical camera frames are also available for most sequences….

NatSGD

NatSGD: A Dataset with Speech, Gestures, and Demonstrations for Robot Learning in Natural Human-Robot Interaction

Recent advancements in multimodal Human-Robot Interaction (HRI) datasets have highlighted the fusion of speech and gesture, expanding robots’ capabilities to absorb explicit and implicit HRI insights. However, existing speech-gesture HRI datasets often focus on…

Neuromorphic-Caltech101 (N-Caltech101) dataset

Garrick Orchard and others

A spiking version of the original frame-based Caltech101 dataset. The N-Caltech101 dataset was captured by mounting the ATIS sensor on a motorized pan-tilt unit and having the sensor move while it views Caltech101 examples on an LCD monitor as shown in the video below….

Tonic

Institute of Neuromorphic Engineering

A tool to facilitate the download, manipulation and loading of event-based/spike-based data. It's like PyTorch Vision but for neuromorphic data!…
